The race is not slowing down! New LLM versions and releases keep arriving almost daily.

The aim is to build the smartest, fastest, or cheapest LLM, or maybe all of that at once.

We have recently seen Gemini 1.5 and Claude 3 ship models with larger context windows that beat GPT-4 on certain metrics.

Anthropic’s Claude 3 Beats GPT-4 Across Main Metrics

Claude 3 vs GPT4 : Smarter, Safer, Cheaper

Now Databricks is getting more serious about LLMs.

The company has come out with DBRX, a new open LLM that aims to change the game.

Indeed, DBRX is open source: Databricks is sharing it with everyone. You can check the code repository here:

GitHub – databricks/dbrx: Code examples and resources for DBRX, a large language model developed by Databricks

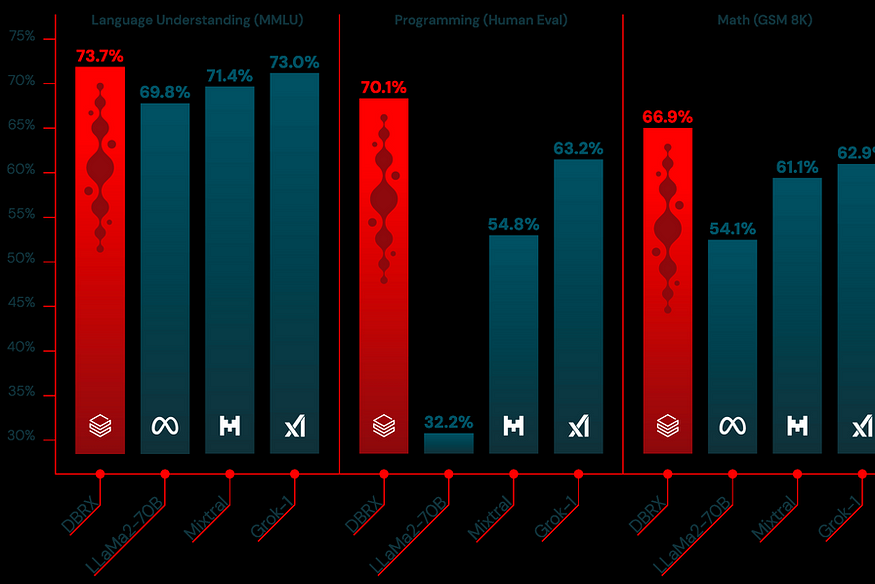

And here comes the interesting part! Among the available open-source LLMs, it beats the best-known ones. Here is the benchmark:

It also beats GPT-3.5 in some areas. Check the benchmark here:

DBRX has been trained on a massive scale, using 3072 of the latest NVIDIA H100 GPUs for two whole months. Think about that. It’s a lot of power, a lot of time, and a lot of smarts. This isn’t just a small step forward. For the first time, something this powerful is open for anyone to use and build upon.

What makes DBRX special isn’t just how smart it is. It’s how it’s built. This model uses a “mixture of experts” (MoE) architecture. Imagine having a team of experts where only the ones who know the topic best step up to answer. That’s how DBRX works: it has 132 billion parameters in total, yet it uses only 36 billion of them for any given input, which keeps it fast and efficient. For more details on the MoE implementation behind the model, see MegaBlocks.
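To make the "team of experts" idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a toy illustration, not DBRX's actual code: the experts are simple scaling functions, the router scores are random stand-ins for a learned gating layer, and the 16-experts, 4-active configuration is an assumption (this article only gives the 132B/36B parameter counts).

```python
import math
import random


def softmax(logits):
    """Turn raw router scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, gate_logits, k):
    """Run only the selected experts and combine their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))


# Assumed toy configuration: 16 experts, 4 active per token.
NUM_EXPERTS, TOP_K = 16, 4

# Hypothetical experts: expert i simply multiplies its input by (i + 1).
experts = [lambda x, scale=i + 1: scale * x for i in range(NUM_EXPERTS)]

# Stand-in for the scores a learned router would produce for one token.
rng = random.Random(0)
gate_logits = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]

selected = route_top_k(gate_logits, TOP_K)
output = moe_forward(2.0, experts, gate_logits, TOP_K)

# Share of the parameters that is active per input, using the article's figures.
active_fraction = 36 / 132

print("selected experts:", [i for i, _ in selected])
print("combined output:", round(output, 3))
print(f"active parameters: {active_fraction:.0%} of the total")
```

Only 4 of the 16 toy experts run for the token, which is where the savings come from. With the article's figures, about 27% of the parameters are active per input. That is a little more than 4/16, presumably because some parts of a real model are shared by every token rather than split across experts.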

In terms of speed, DBRX beats many other models, with demos showing around 130 tokens/second generation speed. On comparable hardware, Llama 2 was only at 80 tokens/second.
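To get a feel for what those numbers mean in practice, here is a quick back-of-the-envelope calculation. It uses only the two throughput figures quoted above; the 500-token response length is an arbitrary assumption for illustration, and real latency also depends on prompt length, batching, and hardware.

```python
def generation_seconds(num_tokens, tokens_per_second):
    """Time to stream num_tokens at a steady generation speed."""
    return num_tokens / tokens_per_second


DBRX_TPS = 130    # tokens/second, figure quoted in the article
LLAMA2_TPS = 80   # tokens/second, figure quoted in the article
RESPONSE_TOKENS = 500  # assumed response length, for illustration only

speedup = DBRX_TPS / LLAMA2_TPS
dbrx_time = generation_seconds(RESPONSE_TOKENS, DBRX_TPS)
llama2_time = generation_seconds(RESPONSE_TOKENS, LLAMA2_TPS)

print(f"speedup: {speedup:.2f}x")
print(f"DBRX:    {dbrx_time:.2f} s for {RESPONSE_TOKENS} tokens")
print(f"Llama 2: {llama2_time:.2f} s for {RESPONSE_TOKENS} tokens")
```

On these figures DBRX generates roughly 1.6 times faster, which saves a couple of seconds on a response of this assumed length.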

However, DBRX is a heavyweight: you need at least 320 GB of memory to run it, so not everyone can use it straight out of the box. Still, Databricks has made it as easy as possible to get started.

The impact of DBRX going public is significant. For businesses, it means being able to build smarter AI tools without starting from scratch.
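Where does a figure like 320 GB come from? A rough estimate, sketched below, is to multiply the parameter count by the bytes used per parameter. The 16-bit storage format is an assumption here, and the lower-precision rows are hypothetical what-ifs rather than configurations this article says are supported.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


TOTAL_PARAMS = 132e9          # total parameters, from the article
RECOMMENDED_MEMORY_GB = 320   # minimum memory quoted in the article

# Assumed storage formats; only the parameter count comes from the article.
formats = [("16-bit (bf16/fp16)", 2), ("8-bit", 1), ("4-bit", 0.5)]

for name, bytes_per_param in formats:
    gb = weight_memory_gb(TOTAL_PARAMS, bytes_per_param)
    print(f"{name:>20}: {gb:6.1f} GB for weights alone")

# Headroom left for activations, caches, and runtime overhead at 16-bit precision.
headroom_gb = RECOMMENDED_MEMORY_GB - weight_memory_gb(TOTAL_PARAMS, 2)
print(f"headroom at 16-bit: {headroom_gb:.1f} GB")
```

At 16 bits per parameter the weights alone take about 264 GB, so a 320 GB requirement leaves only modest room for everything else the model needs at inference time. Note that all 132 billion parameters must sit in memory even though only 36 billion are used per input.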

It is worth noting, however, that DBRX does not “beat” premium models like GPT-4, Claude 3, or Gemini 1.5, even though it does really well against their previous versions: GPT-3.5, Claude 2, and Gemini 1.

In simple terms, Databricks’ release of DBRX is a big moment for AI. It’s not just about making machines smarter; it’s about making advanced AI more accessible. It’s a tool that invites everyone (big companies, small startups, and curious minds) to join in on the AI revolution. If the future of AI is open, DBRX is now leading the way. Let’s see where this journey takes us.

Sources

[1] Databricks announcement of DBRX on the Databricks blog: https://www.databricks.com/blog/introducing-dbrx-new-state-art-open-llm

I regularly write about AI and data. Feel free to follow me:

- On Medium: https://medium.com/@AhmedF

- On LinkedIn: https://www.linkedin.com/in/ahmedfessi/

- On Twitter: https://twitter.com/ahmedfessi

- On Udemy (my courses are also available on Udemy for Business): https://www.udemy.com/user/ahmedfessi/